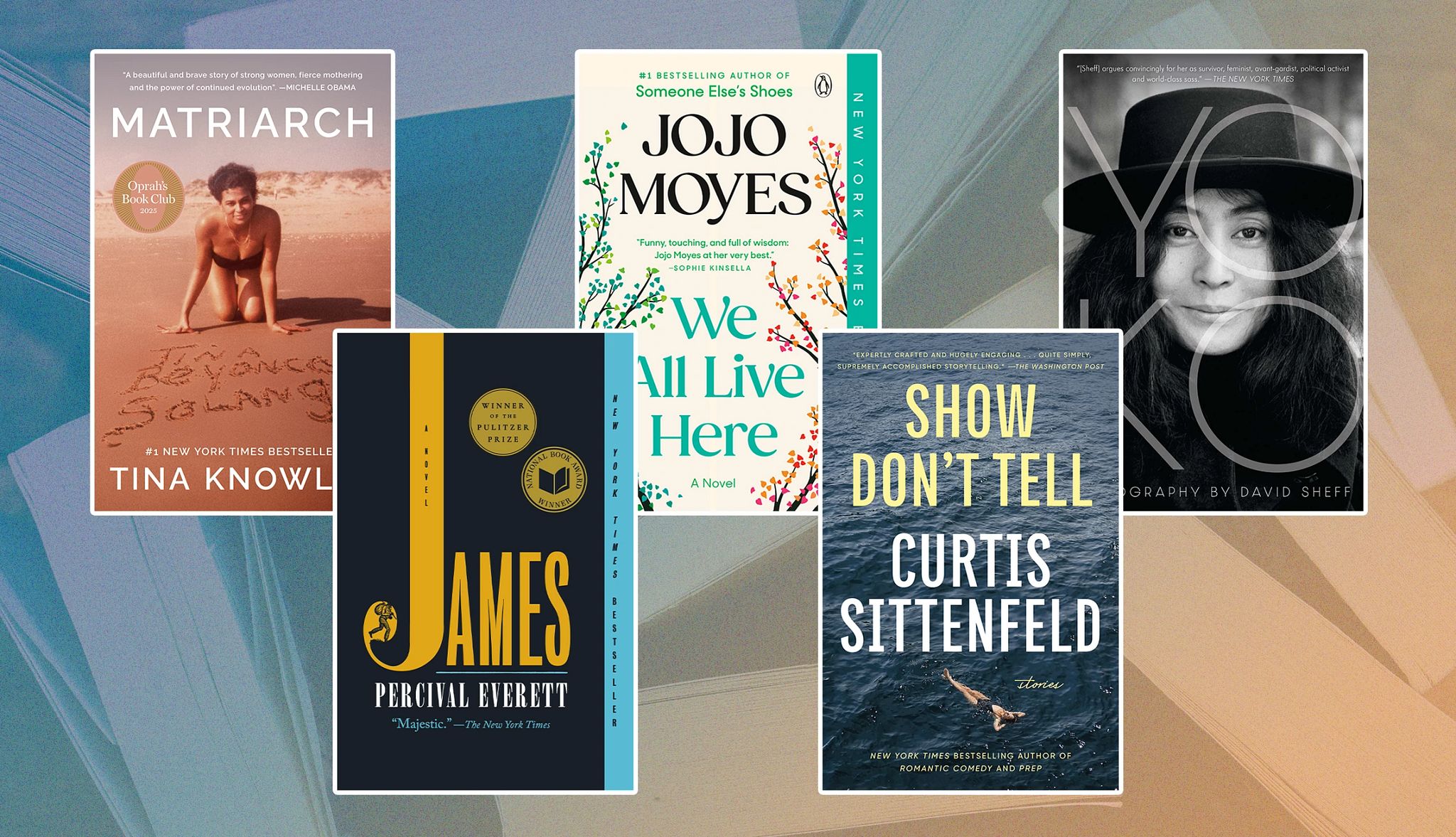

Books

The latest releases, author interviews and more great reads

More Great Reads

AARP Bookstore

AARP publishes books on a range of issues, from health, food, and caregiving to technology, money, and work.

AARP members also get 40% off select titles when they purchase through the publisher.

AARP in Your State

Find AARP offices in your state, along with news, events and programs affecting retirement, health care and more.