When you wake up in the middle of the night feeling overwhelmed by the recent death of a loved one, your new shrink could be an artificial intelligence (AI) chatbot.

It offers advice and suggests techniques to improve your mood. And it’s almost as if you’re texting with a human therapist.

Pouring your heart out to an empathetic AI-enabled app or textbot doesn’t replicate lying on a couch to confide in a human psychotherapist or even calling a crisis line. But with proper guardrails, some mental health professionals see a supporting role for AI, at least for certain people in certain situations.

“I don’t think [AIs] are ever going to replace therapy or the role that a therapist can play,” says C. Vaile Wright, senior director of the office of health care innovation at the American Psychological Association in Washington, D.C. “But I think they can fill a need … reminding somebody to engage in coping skills if they’re feeling lonely or sad or stressed.”

AI could help with therapist shortage

In 2021 in the U.S., nearly 1 in 5 adults sought help for a mental health problem, but more than a quarter of them felt they didn’t get the help they needed, according to a federal Substance Abuse and Mental Health Services Administration annual survey. About 15 percent of adults 50 and older sought those services, and 1 in 7 of that group thought they didn’t receive what they needed.

Globally, the World Health Organization (WHO) estimated in 2019 that about 14.6 percent of all adults 20 and older were living with mental disorders, roughly equivalent to the percentage of adults 50 to 69. About 13 percent of adults 70 and older had mental health problems.

The pandemic caused anxiety and depressive disorders to increase by more than a quarter worldwide, according to WHO. Most people with diagnosed mental health conditions, even before the 2020 surge in need, are never treated. Others, who see few services offered in their countries or a stigma attached to seeking them, choose not to attempt treatment.

A March survey of 3,400 U.S. adults from CVS Health and The Harris Poll in Chicago showed that 95 percent of people age 65 and older believe that society should take mental health and illness more seriously, says Taft Parsons III, chief psychiatric officer at Woonsocket, Rhode Island-based CVS Health. But erasing the stigma that older adults in this country often feel about getting treatment will only increase the need for therapists.

“Artificial intelligence may be leveraged to help patients manage stressful situations or support primary care providers treating mild illnesses, which may take some pressure off the health care system,” he says.

ChatGPT won’t replace Freud — yet

Relying on a digital therapist may not mean spilling your guts to the groundbreaking AIs dominating headlines. Google’s Bard, the new Microsoft Bing and most notably OpenAI’s ChatGPT, whose launch last fall prompted a tsunami of interest in all things artificial intelligence, are called “generative AIs.”

They’ve been trained using vast amounts of human-generated data and can create new material from what’s been input, but they’re not necessarily grounded in clinical support. While ChatGPT and its ilk can help plan your kid’s wedding, write complaint letters or generate computer code, turning their data into your personal psychiatrist or psychotherapist may not be one of their strengths, at least at the moment.

Nicholas C. Jacobson, a Dartmouth assistant professor of biomedical data science and psychiatry in Lebanon, New Hampshire, has been exploring both generative and rules-based scripted AI bots for about 3½ years. He sees potential benefits and dangers to each.

“The gist of what we’ve found with pre-scripted works is they’re kind of clunky,” he says. On the other hand, “there’s a lot less that can go off the rails with them. … And they can provide access to interventions that a lot of people wouldn’t otherwise be able to access, particularly in a short amount of time.”

Jacobson says he’s “very excited and very scared” about what people want to do with generative AI, especially bots likely to be introduced soon — a feeling echoed Tuesday when more than 350 executives, researchers and engineers working in AI released a statement from the nonprofit Center for AI Safety raising red flags about the technology.

Generative AI bots can supply useful advice to someone crying out for help. But because these bots can be easily confused, they also can spit out misleading, biased and dangerous information or spout guidance that sounds plausible but is wrong. That could make mental health problems worse in vulnerable people.

‘Rigorous oversight’ is needed

How you can reach out for human help

If you or a loved one is considering self-harm, go to your nearest crisis center or hospital or call 911.

The 988 Suicide & Crisis Lifeline, formerly known as the National Suicide Prevention Lifeline, is the federal government’s free 24-hour hotline. The nonprofit Crisis Text Line also has 24/7 counselors. Both use trained volunteers nationwide, are confidential and can be reached in ways most convenient to you:

- Dial or text 988. A phone call to 988 offers interpreters in more than 240 languages.

- Call 800-273-TALK (8255), a toll-free phone number, to reach the same services as 988.

- For hearing-impaired users with a TTY phone, call 711 and then 988.

- Text HOME to 741741, the Crisis Text Line.

- In WhatsApp, message 443-SUP-PORT.

- Go to crisistextline.org on your laptop, choose the Chat With Us button and stay on the website.

“The only way that this field should move forward — and I’m afraid will likely not move forward in this way — is carefully,” Jacobson says.

The World Health Organization’s prescription is “rigorous oversight” of AI health technologies, according to a statement the United Nations agency issued in mid-May.

“While WHO is enthusiastic about the appropriate use of technologies, including [large language model (LLM) tools] to support health care professionals, patients, researchers and scientists, there is concern that caution, which would normally be exercised for any new technology, is not being exercised consistently with LLMs.”

The hundreds working with AI technology who signed this week’s statement say vigilance should be a global priority. Wright at the American Psychological Association raises similar concerns:

“The problem with this space is it’s completely unregulated,” she says. “There’s no oversight body ensuring that these AI products are safe or effective.”

More From AARP

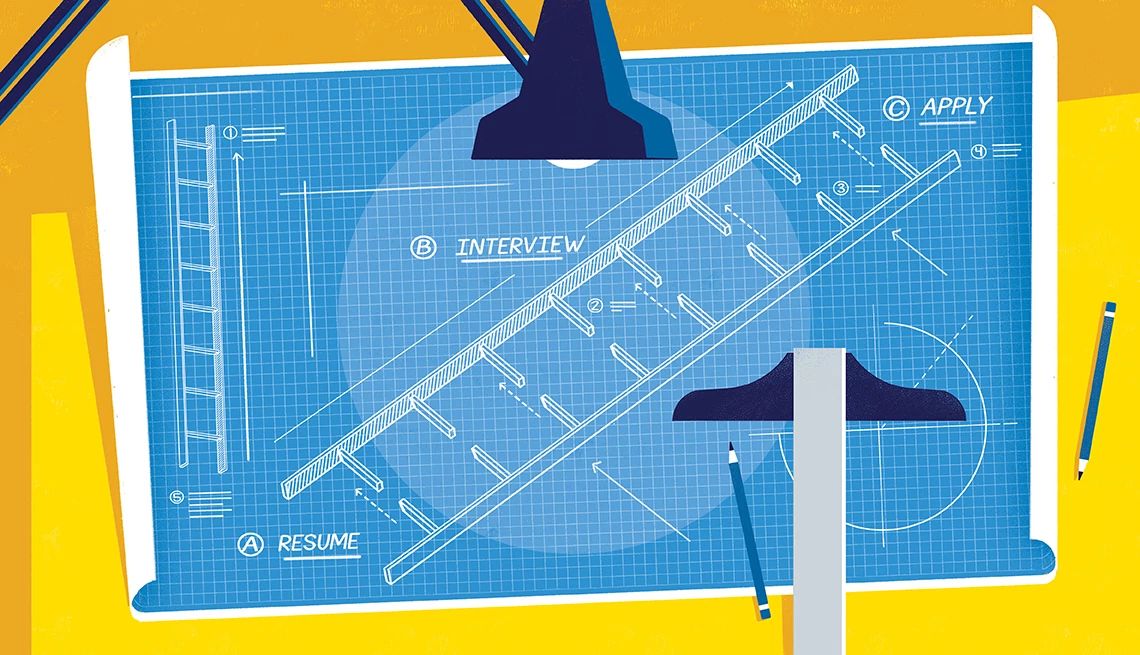

5 Ways Tech Tools Can Help You Get Hired After 50

ChatGPT and other A.I. programs can help with your resume, cover letter, and job search

6 Innovations to Help People Live Better as They Age

Safety, independence are possible by leaning into tech